Tonight was robot & games night at Edmonton’s 31st DemoCamp, which took place at the Centennial Centre for Interdisciplinary Sciences (CCIS) on the University of Alberta campus. After missing the last two, it was great to be back to see some inspiring new projects and entrepreneurs. You can read my recap of DemoCamp Edmonton 29 here.

If you’re new to DemoCamp, here’s what it’s all about:

“DemoCamp brings together developers, creatives, entrepreneurs and investors to share what they’ve been working on and to find others in the community interested in similar topics. For presenters, it’s a great way to get feedback on what you’re building from peers and the community, all in an informal setting. Started back in 2008, DemoCamp Edmonton has steadily grown into one of the largest in the country, with over 200 people attending each event. The rules for DemoCamp are simple: 7 minutes to demo real, working products, followed by a few minutes for questions, and no slides allowed.”

In order of appearance, tonight’s demos included:

- Bento Arm (PDF)

- vrNinja

- Anthrobotics

- Hugo

- RunGunJumpGun

Rory & Jaden showed us the latest version of Bento Arm, a 3D printed robotic arm. It features pressure sensors in the fingertips, servo motors that track velocity and other metrics, potentiometers, and even a camera embedded in the palm. The idea behind having all of those sensors is to use machine learning to improve its capabilities over time (for instance, the camera might recognize objects to help the arm pick them up). The demo showed how the hand could be controlled using a joystick, moving the arm around, and opening and closing the fingers. Bento Arm runs on the Robot Operating System and the team plans to open source everything, hardware and software. To end the demo, they played rock-paper-scissors against the Bento Arm, which won. Welcome to the future!

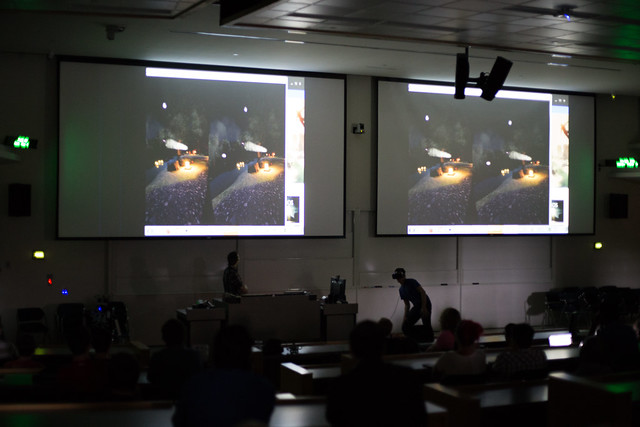

Nathaniel & Alexendar were up next and they showed us vrNinja, a ninja simulation game built for the Oculus Rift VR headset. In the game you are a ninja and you must learn and use new weapons as things get faster and faster. The game features positional audio and requires you to move quite a bit in order to play (so be careful what’s next to you). The team are hoping to release it in the Oculus store in the next month or so, and they have plans to look into the HTC Vive VR headset as well. If you’d like a closer look, you can check out the game this weekend at GDX Edmonton.

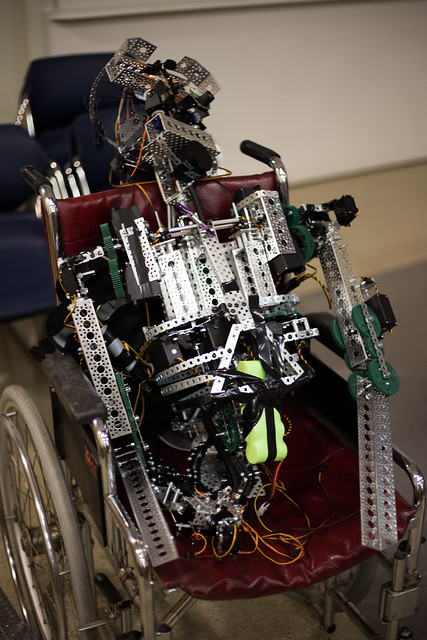

Next, Ian & Evan showed us what they have been working on with Anthrobotics. The idea is to build robots that do all the boring, repetitive tasks that we all need to do each day. They showed three prototypes. The first was an anthropomorphic robot named Robio who sat in a wheelchair. Unfortunately the demo gods got the better of him and the speech demo didn’t work. They said they liked the humanoid form (even though it is difficult to build) because they think it has the greatest potential for being useful in our world. The next two prototypes were a hand that featured an opposable thumb and a leg that could move either as a whole or at just the foot. They are using Arduino boards right now but have plans to add Raspberry Pis in the future. Their robots are very much in the prototype stage, but if this is what they’re doing in high school, I can’t wait to see what they build in the future!

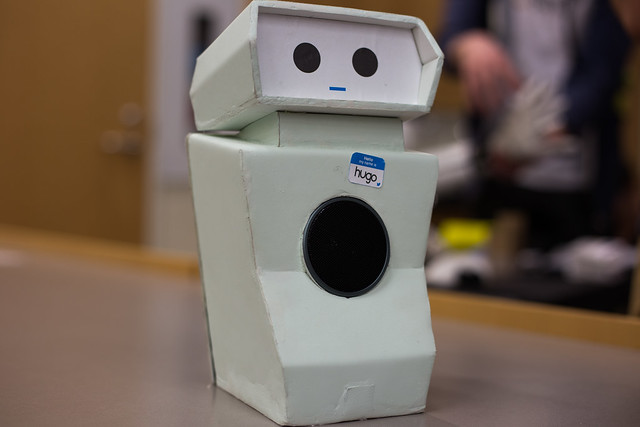

Hugo, the Twitter-powered robot

Jeff and a couple of his colleagues from Paper Leaf were up next to show us Hugo, the Twitter-powered robot that you probably tweeted inappropriate things to last year when it launched. The way it works is you tweet something with the hashtag #hugorobot and Hugo will speak it aloud. You can read more about Hugo here. Hugo was a big success, and even helped Paper Leaf to win an ACE Award. At the experiment’s peak, Hugo was receiving 3100 tweets per hour and more than 7000 people watched the livestream. Hugo was posted to Reddit, 4chan, and 9gag, all of which meant that the team had to work hard to keep the blacklist updated. It’s a fun project and Jeff says you could apply the same concepts of social media and crowdsourcing elsewhere.
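
For the curious, here is a rough sketch of how a hashtag-plus-blacklist filter like the one described might look. This is only my guess at the idea, not Paper Leaf’s actual code: the function name `text_to_speak` and the blacklist entries are made up for illustration, and only the #hugorobot hashtag comes from the demo.

```python
import re

HASHTAG = "#hugorobot"  # the hashtag mentioned in the demo

# Hypothetical placeholder entries; the real blacklist was maintained by the team.
BLACKLIST = {"badword", "worseword"}


def text_to_speak(tweet, blacklist=BLACKLIST):
    """Return the text a Hugo-like robot would speak, or None to skip the tweet."""
    words = tweet.split()
    # Only tweets that carry the hashtag are addressed to the robot.
    if not any(w.lower() == HASHTAG for w in words):
        return None
    # Drop the hashtag itself so it is not read aloud.
    spoken = [w for w in words if w.lower() != HASHTAG]
    # Skip the whole tweet if any word, stripped of punctuation, is blacklisted.
    for w in spoken:
        if re.sub(r"\W", "", w.lower()) in blacklist:
            return None
    # An empty message (hashtag only) is also skipped.
    return " ".join(spoken) or None
```

A real deployment receiving thousands of tweets per hour would need much more than an exact word match (misspellings, spacing tricks, and so on), which is presumably why the team had to keep updating the list by hand.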

Our final demo of the evening was from Matt & Logan who showed us RunGunJumpGun. It’s a 2D side-scrolling “helicopter-style” game that they first prototyped at last year’s GDX Edmonton. Now, a year later, they have improved and refined the game, and plan to release it this summer. The game features 40 levels that increase along a difficulty curve so that as you progress you should master the skills needed to win. Though honestly the last level looked impossible to pass! There’s a certain amount of frustration that comes along with the style of play, but it also has a high degree of replayability. They plan to launch an iPhone version at some point too.

Some upcoming events to note:

- Monthly Hack Day is coming up this Saturday at Startup Edmonton

- GDX Edmonton takes place Saturday and Sunday at the Robbins Health Learning Centre downtown

- Preflight Beta takes place Tuesday at Startup Edmonton and “helps founders and product builders experiment and validate a scalable product idea”

- The full Preflight program started today!

- The next ROS Robotics Meetup takes place on May 19 at Startup Edmonton

Over 150 meetup events took place at Startup Edmonton last year! Keep an eye on the Startup Edmonton Meetup group for more upcoming events. They have also added a listing of all the meetups taking place at Startup to the website. You can also follow them on Twitter.

See you at DemoCamp Edmonton 32!
