Though he works in perhaps the most hyped field of science there is, Dr. Richard Sutton comes across as remarkably grounded. At the 2018 AccelerateAB conference on Tuesday, I heard him described as “the Wayne Gretzky of artificial intelligence,” and he’s often called a global pioneer in the field of AI. Sutton has spent 40 years researching AI and literally wrote the textbook on reinforcement learning. But he spent the first part of his closing keynote discussing the tension between ambition and humility. “It’s good to be ambitious,” he told the audience tentatively. “I’m keen on the idea of Alberta being a pioneer in AI.” He tempered that by discussing the risk of ambition turning into arrogance and affecting a scientist’s work.

“I think you should say whatever strong thing is true,” he said. Then: “Edmonton is a world leader in the science of AI.”

Sutton was careful to emphasize the word “science,” noting that Edmonton lags behind when it comes to applying AI. And of course, he backed up his claim with sources, citing DeepMind’s decision to open an international AI research office here at the University of Alberta, and pointing to the csrankings.org site, which ranks the U of A #2 in the world for artificial intelligence and machine learning.

So how did Edmonton come to be such a leader?

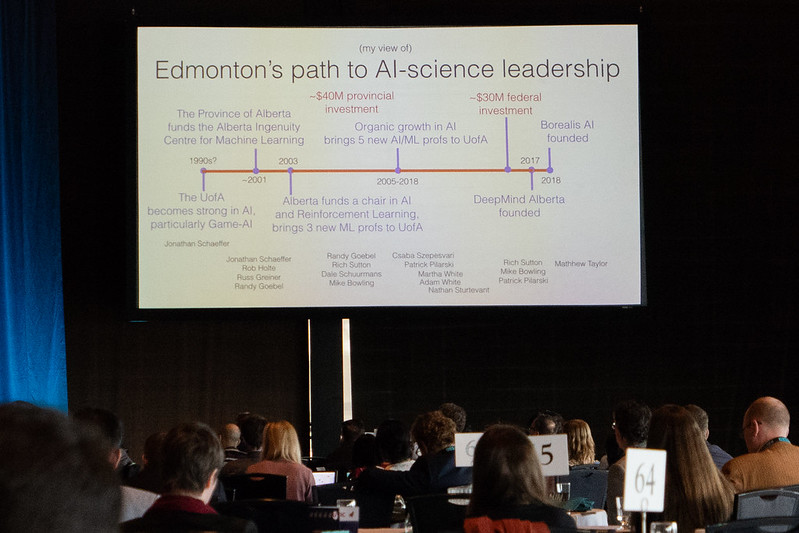

It started with Jonathan Schaeffer’s work in the 1990s on Chinook, the first computer program to win a world championship title in checkers. The U of A’s growing expertise in game AI helped attract a number of AI/ML professors, along with funding from the provincial and federal governments, throughout the early 2000s. Edmonton’s rise to AI prominence was cemented by DeepMind’s recent decision to locate here.

Sutton also showed a timeline illustrating Edmonton’s path to leadership in AI science.

Sutton then outlined some of the key advances that have happened in the field of artificial intelligence over the last seven years:

- IBM’s Watson beats the best human players of Jeopardy! (2011)

- Deep neural networks greatly improve the state of the art in speech recognition, computer vision, and natural language processing (2012-)

- Self-driving cars become a plausible reality (2013-)

- DeepMind’s DQN learns to play Atari games at the human level, from pixels, with no game-specific knowledge (~2014)

- A University of Alberta program solves heads-up limit Texas hold’em poker (2015) and then defeats professional players at heads-up no-limit poker (2017)

- DeepMind’s AlphaGo defeats legendary Go player Lee Sedol (2016) and world champion Ke Jie (2017), vastly improving over all previous programs

- DeepMind’s AlphaZero decisively defeats the world’s best programs in Go, chess, and shogi (Japanese chess), with no prior knowledge other than the rules of each game (2017)

Though the research taking place here in Edmonton and elsewhere has helped make all of that possible, “the deep learning algorithms are essentially unchanged since the 1980s,” Sutton told the audience. The difference is cheaper computation and larger datasets (which cheaper computation makes possible). He showed a chart illustrating Ray Kurzweil’s Law of Accelerating Returns to make the point that it is the relentless decrease in the price of computing that has really made AI practical.

“AI is the core of a second industrial revolution,” Sutton told the crowd. If the first industrial revolution was about physical power, this one is all about computational power. As it gets cheaper, we use more of it. “AI is not like other sciences,” he explained. That’s because of Moore’s Law: the number of transistors on an integrated circuit doubles every two years or so. “It feels slow,” he remarked, and I found myself thinking that only in a room of tech entrepreneurs would you see so many nodding heads. “But it is inevitable.”
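To put some rough numbers on “slow but inevitable,” here is a back-of-the-envelope sketch. It assumes an idealized, constant doubling every two years; the real doubling period has varied over the decades, and Moore’s Law describes transistor counts rather than price directly. The function name `growth_factor` is just a hypothetical helper for this illustration.

```python
# Back-of-the-envelope Moore's Law compounding.
# Assumption: an idealized, constant doubling every two years.
DOUBLING_PERIOD_YEARS = 2.0


def growth_factor(years: float, doubling_period: float = DOUBLING_PERIOD_YEARS) -> float:
    """Return how many times larger a quantity becomes after `years`
    if it doubles once every `doubling_period` years."""
    return 2.0 ** (years / doubling_period)


if __name__ == "__main__":
    # 40 years is roughly the span of Sutton's research career.
    for years in (2, 10, 20, 40):
        print(f"{years:>2} years -> about {growth_factor(years):,.0f}x")
```

Each step looks modest (2x every two years), but over 40 years it compounds to about 1,048,576x, or roughly a million-fold.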

Given this context, Sutton had some things to say about the future of the field:

- “Methods that scale with computation are the future of AI,” he said. That means learning and search, and he specifically called out prediction learning as being scalable.

- “Current models are learned, but they don’t learn.” He cited speech recognition as an example of this.

- “General purpose methods are better than those that rely on human insight.”

- “Planning with a learned model of a limited domain” is a key challenge he sounded excited about.

- “The next big frontier is learning how the world works, truly understanding the world.”

- He spoke positively about “intelligence augmentation”, perhaps as a way to allay fears about strong AI.

Recognizing the room was largely full of entrepreneurs, Sutton finished his talk by declaring that “every company needs an AI strategy.”

I really enjoyed the talk and was happy to hear Sutton’s take on Edmonton and AI. It’s a story that more people should know about. You can find out more about Edmonton’s AI pedigree at Edmonton.AI, a community-driven group with the goal of creating 100 AI and ML companies and projects.

If you’re looking for more on AI to read, I recommend Wait But Why’s series: here is part 1 and part 2.
